AI-native learners are bypassing traditional assessment models—and outperforming them.

“Students are bypassing the system—and outperforming it.”

❝ The line between ‘learning’ and ‘performance’ just got vaporized. ❞

A new reality is emerging fast—and most institutions haven’t seen it coming:

Assessment models are being bypassed by real-world performance.

We’re not speculating here. We’re describing a pattern already visible at the edges:

- Students producing work that exceeds what the assessment criteria can recognise.

- AI tools automating not just the task—but the thinking behind the task.

- Educators deploying GPT-4, Claude, and Perplexity to solve problems faster than policy can react.

What happens when the work becomes undeniably real, but the rubric can’t comprehend it?

The system stalls.

🧠 The Capability–Certification Gap

Education was built on a promise:

“Do the work. Pass the test. Get the credential. Be ready.”

But AI breaks that promise. Because now:

- You can build a tool instead of writing a paper.

- You can launch a micro-business before you finish the unit standard.

- You can deploy recursive workflows that outperform lecturers.

And when you do?

The system doesn’t know what to do with you.

⚙️ Real Performance vs Rubric Logic

| System Assumes | Reality Now |
|---|---|
| Knowledge is delivered in sequence | AI enables nonlinear exploration |
| Assessment captures skill progression | AI lets students leapfrog stages entirely |
| Output must follow preset formats | Real-world outputs are dynamic and live |
| Cheating = using outside help | AI is becoming the collaborator |

In short:

The proof-of-work is being decoupled from the process-of-assessment.

🚨 Why This Is a Systemic Crisis

- The curriculum becomes a bottleneck. If learners can perform at levels beyond the rubric, the system becomes the constraint—not the enabler.

- Teachers become the translators. The best educators are now interpreters between institutional compliance and AI-native capability.

- Credentials start to lose relevance. Why wait 18 months for a certificate when your GPT-enhanced portfolio gets you hired next week?

🔬 Real Case Patterns Emerging

- A Level 5 student uses ChatGPT to create a custom literacy assessment generator for her peers. → Tutor unsure how to grade it—because it’s not “in scope.”

- An apprentice in electrical engineering trains Claude to simulate troubleshooting scripts for customer calls. → It replaces an entire module on technical communication.

- A Level 3 learner fails the written assignment but builds a working prototype with Perplexity and DALL·E. → The prototype doesn’t meet the “assessment criteria,” so they fail.

🧭 What This Really Signals

This isn’t about cheating.

It’s about a shift in the architecture of learning.

AI is decoupling capability from certification.

It’s letting people do the thing before the system even knows how to test for it.

That’s not a gap. That’s a rupture.

🧨 What Happens Next

- Policy panic around “AI misuse”

- Rubric drift as assessors try to adapt

- Shadow workflows where teachers and learners create unofficial success loops

- Credential collapse at the edge: employers stop caring about old signals

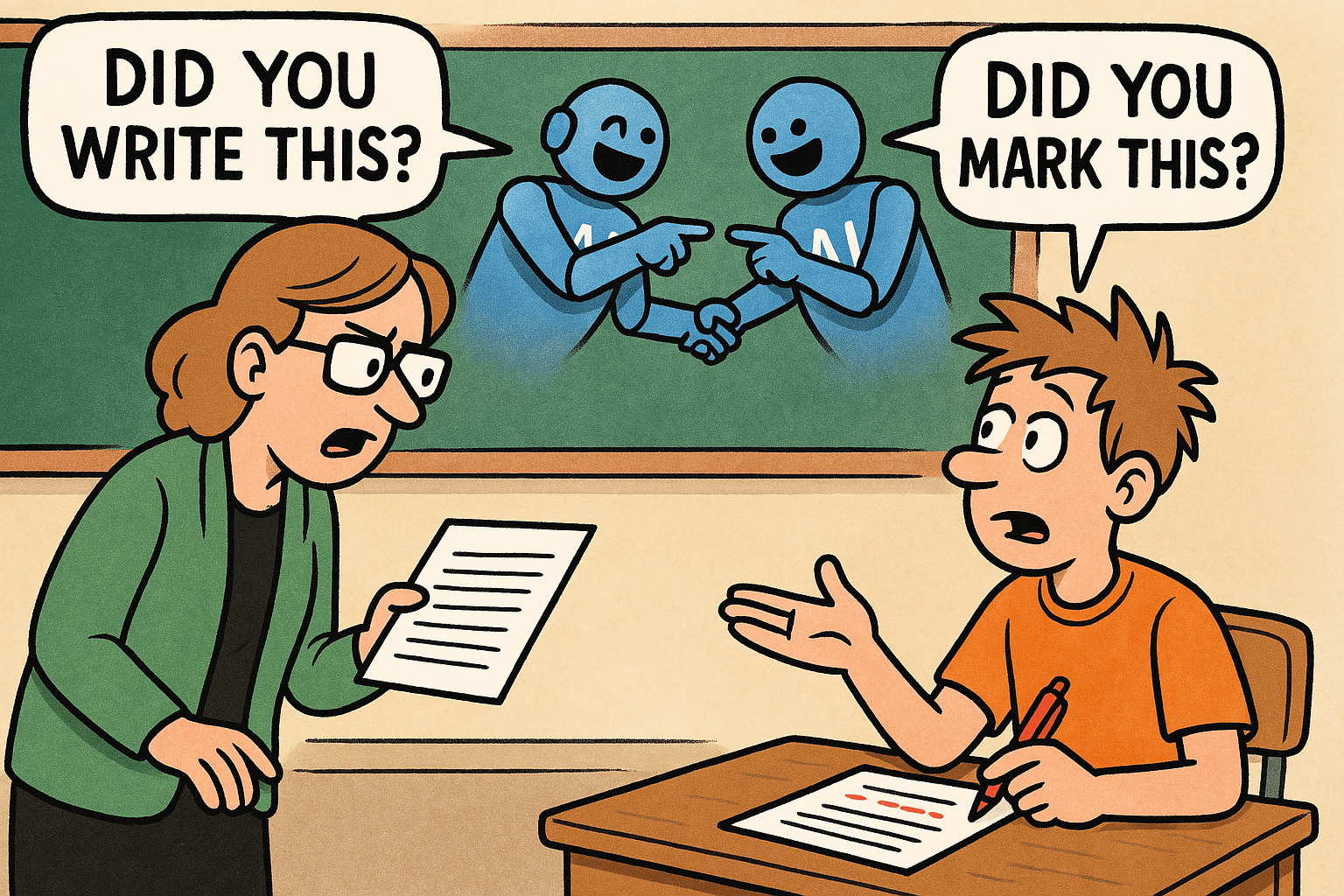

🔥 The Strategic Challenge

If your system is still asking:

“But how do we know they did it themselves?”

Then you’ve already lost the thread.

The new question is:

“Can they do it again, in real time, under evolving conditions?”

That’s what matters now.

That’s what AI-native learners are training for.

That’s what institutions are not ready to assess.

🛰️ Final Transmission:

The real danger isn’t students using AI.

The real danger is when the system no longer knows how to measure learning that actually matters.

Assessment, as we know it, has already collapsed.

We just haven’t admitted it yet.

📌 Call to Action

- Have you seen assessment breaking down in your own teaching or learning space?

- Drop a comment if you’ve already seen this shift happening under the radar.

- 📘 Get the book: Education Is Over. Adapt or Die.

✍️ Graeme Smith
